The basic idea of quantum computing is to break through the barriers that limit the speed of existing computers by harnessing the strange, counterintuitive, and powerful physics of subatomic particles.

Why are quantum computers so important for the future?

In a nutshell...exponential processing power:

Quantum bits, or qubits, can provide exponentially more computing power for certain problems.

Describing the state of a 64-qubit quantum computer takes 2^64 values, roughly 18 billion billion, and each step of computation acts on all of them at once.

Compare this with a classical 64-bit computer, which can process 8 bytes in each step...
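As a rough sanity check on the scale involved, here is a minimal sketch that assumes the comparison is between the 2^64 basis states of a 64-qubit register and the 8 bytes held in one 64-bit classical word. The variable names are illustrative only.

```python
# Number of basis states an n-qubit register spans, versus the width of a classical word.
N_QUBITS = 64

basis_states = 2 ** N_QUBITS           # values needed to describe 64 qubits
classical_word_bytes = N_QUBITS // 8   # a 64-bit word holds 8 bytes

print(f"{basis_states:,} basis states")                 # 18,446,744,073,709,551,616 basis states
print(f"{classical_word_bytes} bytes per 64-bit word")  # 8 bytes per 64-bit word
```

Keep in mind this counts how much a quantum state can represent, not how much you can read out: a measurement still returns only 64 classical bits.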


How does a quantum computer accomplish this?

We'll get into more detail later, but for now you just need to know that quantum computers use qubits that can be in superposition and can be entangled with each other.

Given that potential speedup on certain problems, quantum computing is widely seen as the next big evolution in the computing industry.

Although the field is advancing rapidly, it's still unclear whether we've reached what's called Quantum Supremacy, or the point where quantum computing can solve problems that classical supercomputers practically cannot.

Some predict that quantum supremacy could happen in the next 6 to 36 months. Google's 72-qubit quantum computer, Bristlecone, is one of the machines leading the charge in terms of the number of qubits.

While your average consumer isn't going to need this much computing power, here are a few use cases of quantum computing:

- Developing novel medical treatments by simulating complex biological systems

- Cryptography - widely used encryption protocols like RSA could be broken by a sufficiently large quantum computer

- Financial analysis of global markets through quantum optimization and several other methods - see this article to learn more about Quantum Computing and Finance

This guide to quantum computing is organized as follows:

- Introduction to Quantum Computing

- Overview of Classical Computing

- What is Quantum Mechanics?

- What is Quantum Computing?

- What is a Qubit?

- Applications of Quantum Computing

- Summary: What is Quantum Computing?

1. Introduction to Quantum Computing

When the world's first digital computer was created in 1946 it opened up a world of new possibilities. Early computers were only used for limited applications, and it wasn't until high-level programming languages such as FORTRAN were created that real-world problems could be quickly solved by translating the language into machine code.

Just as current systems are far more powerful than the first digital computers, quantum computers are expected to be exponentially faster and more powerful than classical computers for certain problems.

For decades now, Moore's Law has met computational needs by doubling the number of transistors in an integrated circuit roughly every two years. However, a new breed of applications requires more computational power than ever, particularly in parallel processing.

Quantum computing is still in its early days, but the industry is advancing quickly.

With announcements including Google's 72-qubit quantum computer called Bristlecone, IBM's 400-plus-qubit quantum computer, and plenty of specialist companies like D-Wave, some think we're very close to the age of quantum supremacy.

Unlike classical computers that are based on sequential information processing, quantum computing makes use of three fundamental properties of quantum physics:

- Superposition

- Interference

- Entanglement

These properties are extremely useful for certain tasks, particularly inter-correlated events that are to be executed in parallel.

As you can imagine, this will lead to many new business opportunities that are not possible using classical computers, including quantum machine learning, cybersecurity, chemical and materials design, as well as medical and pharmaceutical research.

2. Overview of Classical Computing

Before we discuss how quantum computers work, it's useful to think about classical computing. At their core, all of our smartphones, tablets, and desktop computers are made up of very simple components, doing very simple things.

They all perform simple arithmetic operations, and their power comes from the immense speed at which they're able to do this.

Computers can perform billions of operations per second, which has allowed us to build and run complex high-level applications with them.

Of course, there are many tasks that classical computers are perfect for, but there are certain calculations that are just too difficult to process.

Many areas in machine learning such as natural language processing, image recognition, and reinforcement learning have been seeing incredible advances in recent years. Progress in this area, however, usually requires supercomputers that consume huge amounts of space and power.

The question then becomes: is there a fundamentally different way to design computer systems?

Here's a brief overview of conventional computing:

- Computer chips contain modules

- Modules contain logic gates

- Logic gates contain transistors

A transistor is like a switch that can either block or open the way for information coming through. This information is made up of bits - which can be set to either 0 or 1.

Combinations of several bits are used to represent more complex information. Transistors are combined to create logic gates, which still do very simple things.

Combinations of logic gates form meaningful modules. Techopedia defines a module as:

...a software component or part of a program that contains one or more routines. One or more independently developed modules make up a program. An enterprise-level software application may contain several different modules, and each module serves unique and separate business operations.

In a nutshell, a transistor is just an electric switch. Electricity is just electrons moving from one place to another, so a switch is a passage that can block electrons from moving past a certain point. A typical scale for a transistor is 14 nanometers, which to give you an idea of size is 500 times smaller than a red blood cell.

3. What is Quantum Mechanics?

The first thing you need to know is that at the subatomic level, things are...different.

Quantum mechanics is physics at the atomic and subatomic level. At the quantum level:

- Matter is quantized

- Energy is quantized

- Momentum is quantized

From this live stream:

Quantum mechanics is the body of scientific laws that describe the motion and interaction of photons, electrons, and other subatomic particles that make up the universe.

Before we get into how this works, let's think about what we're used to in our experience of reality.

In our universe, we're used to one single truth.

If we flip a coin, it's going to land on either heads or tails, right?

In the quantum realm, however, the laws of physics behave differently and there are multiple truths.

Let's say we flip a coin at the subatomic level and don't look at it (we'll get to why this is important in a second). If I asked you whether it was heads or tails, what would you say?

The answer to this question is that it's both.

Until it is observed, the coin exists as both heads and tails at the same time.

And we can prove those multiple truths mathematically.

We mentioned that for these multiple truths to exist, we have to not look at the result of the coin flip. In other words, when there is no observer the coin exists in both states. It's important to note that this observer can be any type of measurement: it doesn't have to be a conscious human observer, it could be any type of measurement device.

So what happens when we look at the coin?

As soon as we introduce an observer, the coin immediately picks heads or tails. In other words, the wave function collapses.

Superposition

Remember how we said that if we flip a coin at the subatomic level and don't look at it, it exists as both heads and tails? This is known as superposition.

From the University of Waterloo's Institute for Quantum Computing:

Superposition is essentially the ability of a quantum system to be in multiple states at the same time — that is, something can be “here” and “there,” or “up” and “down” at the same time.

Entanglement

This concept is tough to explain in relation to classical mechanics because it's unlike anything we experience, so don't worry if it doesn't make sense at first.

Here's the definition from Waterloo's IQC:

Entanglement is an extremely strong correlation that exists between quantum particles — so strong, in fact, that two or more quantum particles can be inextricably linked in perfect unison, even if separated by great distances. The particles remain perfectly correlated even if separated by great distances.

Basically, two entangled particles are connected to each other, so that measuring one instantly determines what a measurement of the other will show, regardless of the distance between them.

This means if one of the particles is observed, the other one will also be instantly affected by the observation.

It doesn't matter how far apart they are from each other in the universe. The other particle could be in another galaxy, but as soon as one is measured, the state of the other is instantly fixed too. (This correlation can't be used to send a message faster than light, since each individual result still looks random.)
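To make the idea of correlated outcomes a bit more concrete, here is a small sketch that samples measurements from a two-particle entangled state. It assumes the simplest textbook example, the Bell state (|00> + |11>) / sqrt(2), and the names `BELL_STATE` and `measure_pair` are made up for this illustration. It is a classical simulation of the statistics, not a model of real hardware.

```python
import random

# Amplitudes of the Bell state (|00> + |11>) / sqrt(2), keyed by the two-bit outcome.
BELL_STATE = {"00": 2 ** -0.5, "01": 0.0, "10": 0.0, "11": 2 ** -0.5}

def measure_pair(state, rng):
    """Sample one joint measurement; each outcome occurs with probability |amplitude|^2."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [measure_pair(BELL_STATE, rng) for _ in range(1000)]

# Each particle on its own looks like a fair coin, yet the two results always agree.
agree = sum(s[0] == s[1] for s in samples)
first_is_one = sum(s[0] == "1" for s in samples)
print(f"pairs that agree: {agree} / {len(samples)}")
print(f"first particle read 1 in {first_is_one} of {len(samples)} runs")
```

The first count comes out at every pair, while the second hovers around half: neither particle's result is predictable, but once you know one you know the other.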

The Famous Double Slit Experiment

Now that we have a very basic example, let's look at how this quantum weirdness was actually measured. Since we can't introduce an observer, this is a tricky problem.

In this experiment, they used an electron beam gun that shot an electron at a screen that measured where it landed. On the way to the screen, however, was a wall with two slits that the electron could go through.

As they shot the electron through the double slit wall, there were two logical possibilities: either the electron is a wave or a particle.

It turns out, however, that the electron is both. It behaves as both a particle and a wave.

So how do we describe the particle-wave duality that exists at the quantum level?

We can describe it mathematically using what's known as the Schrödinger equation. We won't get into the equation in this article, but all we need to know is that things are different at the subatomic level, and quantum computers can exploit these unique properties.

Now let's discuss how this can be applied to computing.

4. What is Quantum Computing?

Quantum computers approach solving problems in a fundamentally new way.

The hope is that with this new approach to computing we'll be able to solve problems that have been impossible due to physical limitations.

We know that a bit in classical computing can be either 1 or 0.

In quantum computing, a qubit can be both 1 and 0.

A quantum computer uses qubits to supply information and communicate through the system. A qubit is encoded with quantum information in both states of 0 and 1, instead of a classical bit, which can only be 0 or 1.

This means a qubit can be in multiple states at once due to superposition.

This is a unique feature of quantum computers - their ability to perform an indefinite number of superposed tasks thanks to their quantum properties and quantum gates.

The quantum gates enable an event to exist in multiple states, meaning all these events can be executed in parallel and simultaneously - as opposed to the binary operations of classical computers.

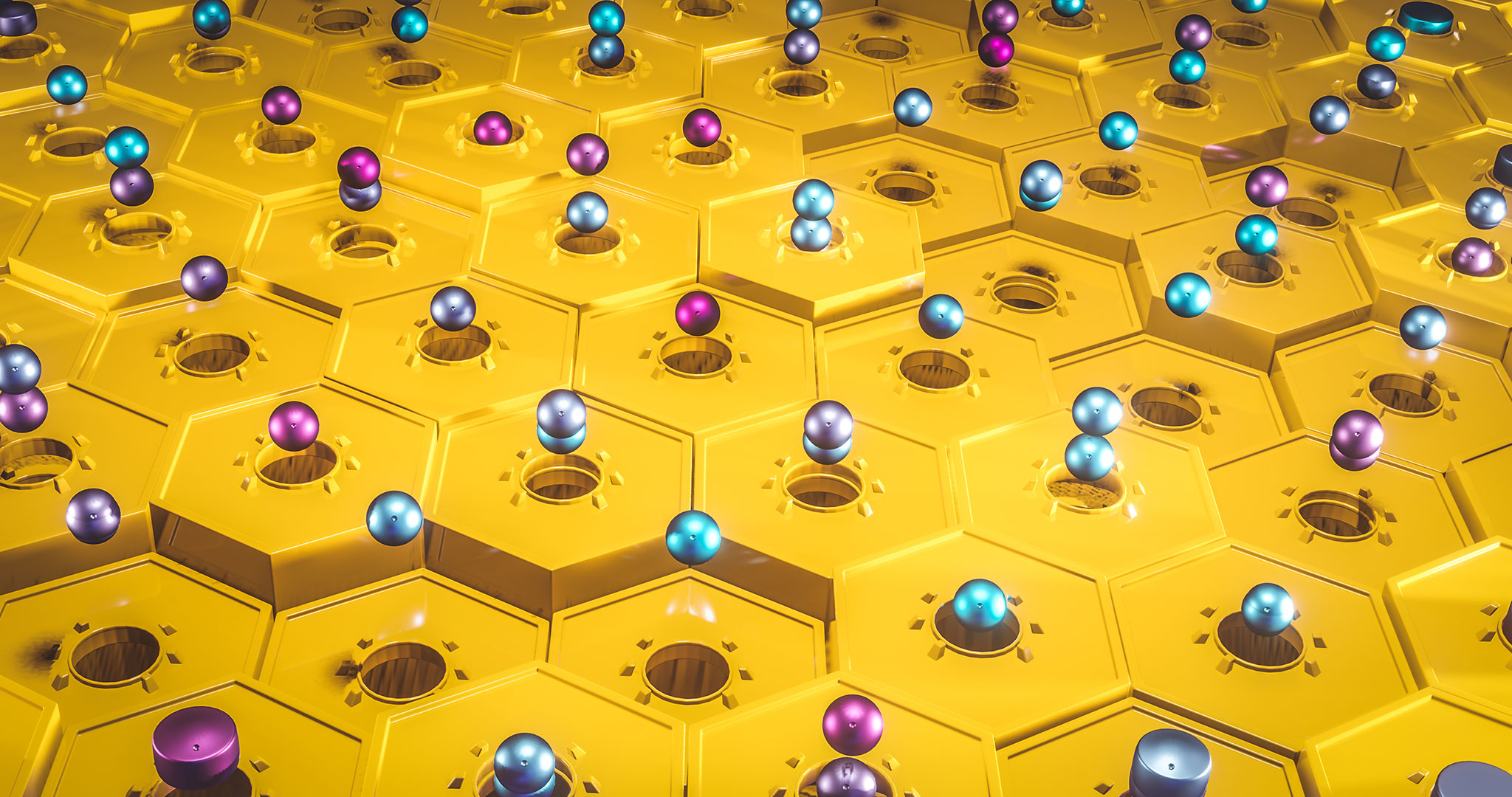

5. What is a Qubit?

A qubit can be any two-level quantum system, such as a spin in a magnetic field, or a single photon. 0 and 1 are the system's possible states - like the photon's horizontal or vertical polarization.

In other words, in the quantum world, a qubit doesn't have to be either 0 or 1 - it can be in any proportion of both states at once.

As we discussed earlier, this phenomenon is known as superposition.

As soon as you test its value, say by sending the photon through a filter, it has to decide to be either vertically or horizontally polarized.

So as long as the photon is unobserved, it is in a superposition of probabilities for 0 and 1...and you can't predict which it will be.

And the instant you measure it, it collapses into one of two definite states - either 0 or 1.
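Here is a minimal sketch of that measurement step, assuming the usual textbook description of a qubit as two amplitudes, alpha for 0 and beta for 1, where the chance of reading 0 is |alpha|^2. The function name `measure` and the example state are illustrative choices, not something from a particular library.

```python
import math
import random

def measure(alpha, beta, rng):
    """Measure a qubit alpha|0> + beta|1>; return (outcome, collapsed_state)."""
    p0 = abs(alpha) ** 2                       # probability of reading 0
    outcome = 0 if rng.random() < p0 else 1
    collapsed = (1.0, 0.0) if outcome == 0 else (0.0, 1.0)
    return outcome, collapsed

# Example state (an assumption for this sketch): an equal superposition of 0 and 1.
alpha = beta = 1 / math.sqrt(2)
assert math.isclose(abs(alpha) ** 2 + abs(beta) ** 2, 1.0)  # state must be normalized

rng = random.Random(42)
counts = {0: 0, 1: 0}
for _ in range(10_000):
    outcome, _state = measure(alpha, beta, rng)
    counts[outcome] += 1
print(counts)  # roughly half 0s and half 1s
```

Any single run gives a definite 0 or 1 that you couldn't have predicted. Only the statistics over many fresh copies of the qubit reveal the underlying proportions.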

This concept changes everything for computing. Here's an example to demonstrate why...

- 4 classical bits can be in 1 of 2^4 different configurations at a time. That's 16 possible combinations, out of which you can use just 1.

- 4 qubits in superposition can be in all of the 16 possible combinations at once. This number grows exponentially with each additional qubit. By 20 qubits you can store over a million possible configurations at once.
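The counting in the two bullets above can be checked with a few lines of Python. This is only arithmetic on the number of configurations; the helper name `configurations` is made up for the example.

```python
from itertools import product

def configurations(n_bits):
    """List every configuration of n_bits classical bits as a string."""
    return ["".join(bits) for bits in product("01", repeat=n_bits)]

four_bit = configurations(4)
print(len(four_bit))    # 16
print(four_bit[:3])     # ['0000', '0001', '0010']

# A classical register holds one of these at a time. Describing n qubits in
# superposition takes one amplitude per configuration, i.e. 2**n numbers.
for n in (4, 10, 20):
    print(n, 2 ** n)    # 4 16, then 10 1024, then 20 1048576
```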

Another important concept is qubit manipulation. A normal logic gate gets a set of inputs and produces one definite output. A quantum gate manipulates an input of superpositions, rotates probabilities, and produces another superposition as its output.

So a quantum computer sets up some qubits, applies quantum gates to entangle them and manipulate probabilities, and then measures the outcome by collapsing the superpositions to 0's and 1's.
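That set up, apply gates, measure cycle can be sketched with a tiny state-vector simulation. The version below assumes two standard gates, Hadamard (puts a qubit into superposition) and CNOT (entangles two qubits). The helper names are hypothetical, and a classical simulation like this needs 2^n numbers for n qubits, so it only works for small n.

```python
import math
import random

def apply_hadamard(state, qubit, n_qubits):
    """Apply a Hadamard gate to one qubit of an n-qubit state vector."""
    h = 1 / math.sqrt(2)
    mask = 1 << (n_qubits - 1 - qubit)    # qubit 0 is the leftmost bit
    new = list(state)
    for i in range(len(state)):
        if (i & mask) == 0:
            a, b = state[i], state[i | mask]
            new[i], new[i | mask] = h * (a + b), h * (a - b)
    return new

def apply_cnot(state, control, target, n_qubits):
    """Flip the target qubit in every basis state where the control qubit is 1."""
    c = 1 << (n_qubits - 1 - control)
    t = 1 << (n_qubits - 1 - target)
    new = list(state)
    for i in range(len(state)):
        if (i & c) and not (i & t):
            new[i], new[i | t] = state[i | t], state[i]
    return new

def measure_all(state, n_qubits, rng):
    """Collapse the register to one bit string, chosen with probability |amplitude|^2."""
    probs = [abs(a) ** 2 for a in state]
    index = rng.choices(range(len(state)), weights=probs, k=1)[0]
    return format(index, f"0{n_qubits}b")

n = 2
state = [1.0, 0.0, 0.0, 0.0]              # set up: both qubits start as 0
state = apply_hadamard(state, 0, n)       # superposition on qubit 0
state = apply_cnot(state, 0, 1, n)        # entangle qubit 0 with qubit 1
print([round(a, 3) for a in state])       # [0.707, 0.0, 0.0, 0.707]
print(measure_all(state, n, random.Random(7)))  # a single definite bit string
```

Applying `apply_hadamard` twice to the same qubit brings it back to where it started, because the two paths to 1 cancel each other out. That cancellation is the interference that quantum algorithms rely on.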

What this means is that you get the entire collection of calculations that are possible with your setup, all done at the same time. You can only measure one of the results, but by exploiting the properties of superposition and entanglement this can be exponentially more efficient than a normal computer.

Are quantum computers faster than classical computers?

The answer right now is that yes, they are...for some problems.

They are not going to replace classical computers any time soon, but they will augment them.

You can think of them as special computers for a specific set of problems.

An example of a situation where a quantum computer would excel over a classical one is searching an unsorted database. To search it, a normal computer may have to check every one of its entries, whereas a quantum computer using Grover's algorithm needs only about the square root of that number of steps. For large databases, this can be a huge difference.
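The square-root speedup for search comes from Grover's algorithm. Below is a small classical simulation of it, written as a sketch: it assumes exactly one marked entry, tracks one amplitude per database entry, and the function name `grover_search` and the example values (1024 entries, entry 777 marked) are made up for illustration.

```python
import math

def grover_search(n_items, marked):
    """Simulate Grover's algorithm; return (best_guess, oracle_calls, success_probability)."""
    amps = [1 / math.sqrt(n_items)] * n_items        # start in a uniform superposition
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        amps[marked] = -amps[marked]                 # oracle: flip the marked amplitude
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]          # diffusion: reflect about the mean
    probs = [a * a for a in amps]
    return probs.index(max(probs)), iterations, probs[marked]

guess, calls, p_success = grover_search(n_items=1024, marked=777)
print(guess, calls)   # 777 25
print(p_success)      # close to 1
```

For 1024 entries the simulation makes 25 oracle calls, while a classical scan needs about 512 lookups on average and 1024 in the worst case. The simulation itself is not faster, of course, since it stores all 1024 amplitudes. The speedup only appears on real quantum hardware.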

6. Applications of Quantum Computing

Quantum computers are already available to run certain tasks, all of which require very intensive computation that classical computers cannot handle. From this SlideShare, we see four of the main applications are:

- Cryptography

- Medicine and materials

- Machine learning

- Searching big data

Let's discuss a few applications of quantum computing in more detail.

Quantum Machine Learning

Artificial intelligence companies are leading the development and deployment of quantum computing. One of the early applications is to speed up existing machine learning algorithms, and the day may soon come when it creates entirely new classes of algorithms that do not currently exist.

There are 4 known ways that quantum computers can be used for machine learning:

- Optimization

- Sampling

- Kernel Evaluation

- Linear Algebra

An interesting company in this space is D-Wave's Quadrant.ai, which can be used for deep learning with a lot less data.

They build deep learning models to assign labels and generate data which enables us to train accurate, discriminative models using significantly less labeled data.

New Material Synthesis

This means looking at how materials are organized at the atomic level - including their atomic imperfections, how cells are interrelated, and so on.

The Variational Quantum Eigensolver (VQE) is currently widely used to calculate chemical properties and design new materials.

Financial Forecasting

Quantum computing is used in finance for portfolio optimization, scenario analysis, and pricing. The most common models used are the Black-Scholes-Merton model and Monte Carlo evaluation.

Government

Government agencies are early supporters of quantum computing and have been running scenario simulation and analysis, such as policy design and simulation of climate change.

Cybersecurity

The principle of entanglement is being used in quantum key distribution, although no quantum computing hardware is used.

Supply Chain

Quantum computers are well suited to perform traffic simulation, vehicle routing, and optimization. All of this can be used to reduce time to deliver, grow sales, reduce operational costs, and improve customer service levels.

7. Summary: What is Quantum Computing?

Now that we've discussed the key concepts and applications of quantum computing, here is a summary of the classical vs. quantum approaches to computing.

Classical Computing Approach:

- Bit as unit

- Sequence-based

- Distributed: from the cloud to the edge

- Conducted in normal conditions

- Works well for classical tasks - text, video, image, internet, etc.

Quantum Computing Approach:

- Qubit as unit

- Superposition and entanglement are key

- Distributed computing is unlikely, will likely remain only in the cloud

- Requires specific conditions - low temperatures, high magnetic fields (5-10 Tesla)

- Not optimized for classical tasks

- Optimized for advanced use cases that require massive amounts of parallelism

As we have discussed in this guide, quantum computing is an incredibly powerful and nascent technology.

With quantum supremacy right around the corner, now is certainly a good time to learn more about how quantum computers can be used to solve the world's most challenging problems.

This article was originally posted on 2019-03-20 and updated on 2022-12-17.